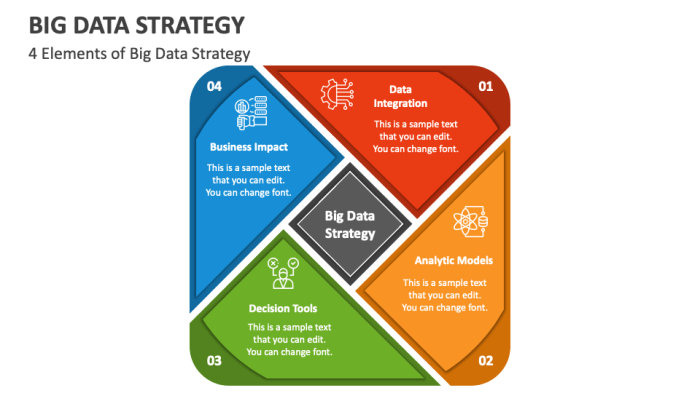

Data migration projects, while essential for business modernization and growth, often present significant challenges. Successfully navigating these complexities requires a strategic approach, and at the heart of this strategy lies the ability to accurately measure success. This necessitates the establishment of clear, quantifiable metrics that provide a data-driven understanding of project performance, enabling informed decision-making and proactive issue resolution.

This analysis explores the critical aspects of defining, measuring, and monitoring key performance indicators (KPIs) throughout the migration lifecycle. We will dissect methods for setting baselines, ensuring data quality, tracking efficiency, and optimizing resource utilization. Furthermore, we’ll examine how to incorporate user acceptance, cost analysis, and risk mitigation strategies into a robust measurement framework. The goal is to equip readers with the knowledge and tools to transform data migration from a complex undertaking into a predictable and manageable process, leading to successful outcomes.

Defining Migration Goals

Data migration projects are often complex undertakings, demanding significant resources and careful planning. Clearly defined goals are paramount to ensure a successful migration and alignment with overall business objectives. Without a well-defined roadmap, projects can easily veer off course, leading to cost overruns, delays, and ultimately, failure to realize the anticipated benefits. The following sections outline how to effectively define and scope migration goals.

Common Business Objectives Driving Data Migration Projects

Data migration initiatives are frequently driven by specific business needs and strategic objectives. These objectives often intersect and influence the scope and priorities of the migration. Understanding these drivers is crucial for establishing relevant success metrics.

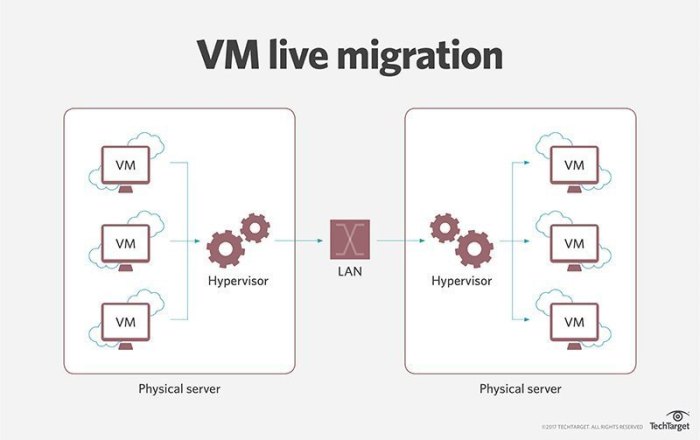

- Cost Reduction: Migrations to cloud-based platforms or more efficient storage solutions can significantly reduce operational costs. This includes expenses related to hardware, software licensing, and IT staff. For instance, a company migrating from on-premises servers to a cloud infrastructure like AWS or Azure might anticipate a 20-30% reduction in IT infrastructure costs within the first year, as observed in several case studies.

- Enhanced Agility and Scalability: Modern business environments demand flexibility. Data migration to cloud platforms allows for easier scaling of resources to meet fluctuating demands. This agility allows businesses to respond more quickly to market changes and opportunities. A SaaS provider, for example, might migrate its database to a cloud-based solution to accommodate a rapid increase in user base.

- Improved Performance: Migrations can improve data access speed, query performance, and overall system responsiveness. This is often achieved by moving data to optimized storage systems or databases. For example, migrating from a legacy database to a modern NoSQL database can result in a significant performance boost for applications that require real-time data access, as reported by companies like MongoDB.

- Data Security and Compliance: Migrations can enhance data security by moving data to more secure platforms with robust security features and compliance certifications. Compliance with regulations such as GDPR or HIPAA is often a key driver. For instance, a healthcare provider migrating patient data to a HIPAA-compliant cloud environment ensures adherence to data privacy regulations.

- Business Continuity and Disaster Recovery: Data migrations can improve business continuity by providing more reliable backup and disaster recovery solutions. Cloud platforms often offer built-in disaster recovery capabilities. A financial institution, for example, might migrate its data to a cloud platform with automated backup and failover mechanisms to ensure business operations in the event of a disaster.

- Consolidation and Modernization: Migrations frequently involve consolidating disparate data sources or modernizing legacy systems. This can streamline operations, improve data quality, and provide a unified view of data across the organization. For example, a retail company might migrate data from multiple legacy systems to a single, modern data warehouse for better customer insights.

Aligning Migration Goals with Overall Business Strategy

Data migration goals must be aligned with the broader business strategy to ensure that the migration supports the organization’s overall objectives. This alignment involves identifying the strategic priorities of the business and how the migration can contribute to achieving those priorities.

- Strategic Alignment: Begin by identifying the company’s strategic goals, such as market expansion, increased revenue, or improved customer satisfaction. Then, determine how the data migration can contribute to these goals. For instance, if the business aims to expand into a new market, a data migration that consolidates customer data and improves data analytics capabilities can facilitate informed decision-making in the new market.

- Stakeholder Engagement: Involve key stakeholders from different business units (e.g., marketing, sales, operations) in defining the migration goals. This ensures that the migration addresses the needs of all relevant departments and that the project is supported across the organization. Regular communication and feedback loops are critical for maintaining alignment.

- Prioritization: Prioritize migration goals based on their strategic importance and potential impact on the business. This involves assessing the benefits of each goal and ranking them accordingly. For example, a goal that directly contributes to revenue growth might be prioritized over a goal that primarily focuses on cost reduction, unless the cost reduction is critical to maintaining profitability.

- Metrics and Measurement: Establish clear metrics to measure the success of the migration in relation to the business strategy. For example, if the goal is to improve customer satisfaction, the metric could be a reduction in customer support tickets or an increase in Net Promoter Score (NPS).

- Examples of Strategic Alignment:

  - Goal: Improve Customer Experience. Migration Action: Migrate customer data to a CRM system with enhanced analytics. Strategic Benefit: Improved customer segmentation, personalized marketing campaigns, and increased customer retention.

  - Goal: Drive Operational Efficiency. Migration Action: Consolidate data from multiple legacy systems into a single data warehouse. Strategic Benefit: Streamlined reporting, reduced manual processes, and improved decision-making.

  - Goal: Expand into New Markets. Migration Action: Migrate data to a cloud-based platform with global accessibility. Strategic Benefit: Scalable infrastructure, faster deployment in new regions, and improved ability to serve international customers.

Strategies for Clearly Defining the Scope and Objectives of a Migration

A well-defined scope and clear objectives are essential for a successful data migration. This involves a systematic approach to understanding the data, the systems involved, and the desired outcomes.

- Data Assessment: Conduct a thorough assessment of the existing data. This includes identifying the data sources, data types, data quality, and data volume. Data profiling tools can be used to analyze data characteristics, identify data quality issues, and assess the complexity of the migration.

- System Analysis: Analyze the source and target systems involved in the migration. This includes understanding the data models, system architectures, and integration requirements. Documenting the system dependencies and potential compatibility issues is crucial.

- Objective Definition: Define specific, measurable, achievable, relevant, and time-bound (SMART) objectives for the migration. For example, instead of “improve data quality,” a SMART objective would be “reduce data errors by 10% within six months.”

- Scope Definition: Clearly define the scope of the migration, including which data sets will be migrated, which systems will be involved, and which functionalities will be supported. Avoiding scope creep is essential, so any changes to the scope must be carefully evaluated and approved.

- Documentation: Create comprehensive documentation that outlines the migration plan, objectives, scope, data mappings, and technical specifications. This documentation serves as a reference point for the project team and stakeholders.

- Pilot Projects: Consider conducting pilot projects to test the migration process and validate the data transformation logic. Pilot projects can help identify potential issues early on and refine the migration plan before a full-scale migration.

- Examples of Scope Definition:

  - Scenario: Migrating customer data from a legacy CRM system to Salesforce. Scope: Migrate all customer records, including contact information, purchase history, and support tickets. Exclude historical email communications.

  - Scenario: Migrating data from an on-premises database to a cloud-based data warehouse. Scope: Migrate all sales data, including transaction details, product information, and customer demographics. Implement data transformation and cleansing processes.

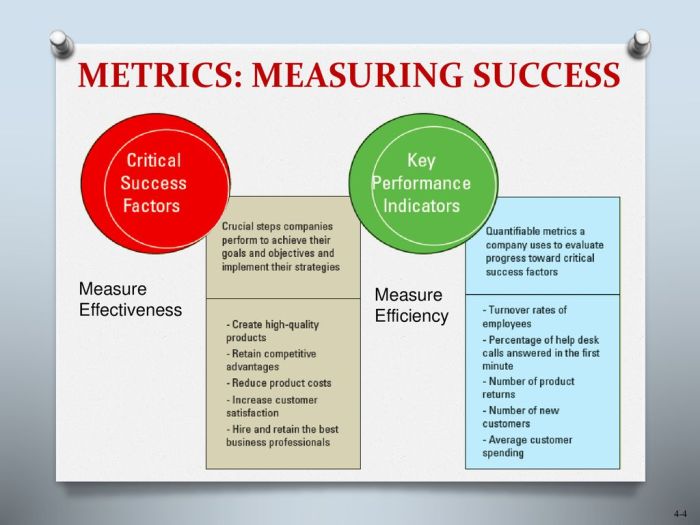

Identifying Key Performance Indicators (KPIs)

Establishing measurable success metrics for migration projects necessitates the careful selection and definition of Key Performance Indicators (KPIs). These KPIs serve as quantifiable benchmarks, allowing for objective assessment of progress, identification of potential roadblocks, and ultimately, determination of project success. This section details the selection of appropriate KPIs, focusing on their application to data accuracy, completeness, and integrity.

Types of KPIs for Migration Projects

Migration projects encompass various facets, necessitating a diverse range of KPIs. These indicators can be broadly categorized based on the aspects of the migration process they evaluate. Understanding these categories enables a more targeted and effective approach to monitoring progress.

- Project Management KPIs: These KPIs focus on the overall efficiency and effectiveness of the migration project itself. They measure aspects such as timelines, budget adherence, and resource allocation. Examples include:

  - Project Completion Rate: The percentage of migration tasks completed against the total planned tasks. This directly reflects the project’s pace.

  - Budget Variance: The difference between the planned budget and the actual expenditure. This indicates financial control and resource management.

  - Schedule Adherence: The degree to which the project adheres to the planned timeline. It measures the difference between the planned and actual completion dates for key milestones.

- Technical KPIs: These KPIs assess the technical aspects of the migration, focusing on data transfer, system performance, and application functionality. Examples include:

  - Data Transfer Rate: The speed at which data is migrated from the source to the target system, often measured in gigabytes per hour.

  - Downtime: The duration the system is unavailable during the migration process. Minimizing downtime is crucial for business continuity.

  - System Performance: Measures the responsiveness and efficiency of the target system after migration. Metrics include latency, throughput, and error rates.

- Data Quality KPIs: These KPIs are specifically designed to assess the accuracy, completeness, and integrity of the migrated data. These are critical to ensuring the migrated data is reliable and usable. Examples are detailed in subsequent sections.
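The project management KPIs above reduce to simple arithmetic. The following is a minimal sketch, assuming hypothetical function names and one possible sign convention (negative budget variance means over budget, positive schedule slip means late); a real project would take these inputs from its project management tooling.

```python
from datetime import date


def completion_rate(completed_tasks: int, planned_tasks: int) -> float:
    """Percentage of planned migration tasks that are complete."""
    if planned_tasks <= 0:
        raise ValueError("planned_tasks must be positive")
    return 100.0 * completed_tasks / planned_tasks


def budget_variance(planned: float, actual: float) -> float:
    """Planned minus actual spend (assumed convention: negative = over budget)."""
    return planned - actual


def schedule_slip_days(planned: date, actual: date) -> int:
    """Days between planned and actual milestone dates (positive = late)."""
    return (actual - planned).days


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    print(completion_rate(45, 60))
    print(budget_variance(100_000, 112_500))
    print(schedule_slip_days(date(2024, 3, 1), date(2024, 3, 8)))
```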

KPIs for Data Accuracy

Data accuracy is paramount for the successful operation of any system. Inaccurate data can lead to incorrect business decisions, operational inefficiencies, and reputational damage. Therefore, meticulous monitoring of data accuracy is essential during and after the migration process.

- Data Validation Rate: This KPI measures the percentage of data records that successfully pass validation rules after migration. It directly reflects the degree to which data conforms to predefined standards.

- Error Rate: This measures the frequency of errors encountered during data migration, such as data corruption or format inconsistencies.

- Data Reconciliation Rate: This quantifies the percentage of data records that match between the source and target systems after migration. This is particularly useful when dealing with critical data sets.

- Data Transformation Accuracy: This measures the percentage of data correctly transformed from the source to the target system. This assesses the effectiveness of data mapping and transformation rules. For instance, if 10,000 records require a currency conversion and 9,950 are converted correctly, the transformation accuracy is 99.5%.
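As an illustration of how these accuracy KPIs might be computed, here is a minimal sketch. The helper names (`pct`, `validation_rate`, `reconciliation_rate`) and the sample records are hypothetical; it assumes records are plain dictionaries keyed by a record ID that is shared between source and target.

```python
from typing import Callable, Iterable, Mapping


def pct(part: int, total: int) -> float:
    """Return part/total as a percentage; an empty total counts as 0%."""
    return 100.0 * part / total if total else 0.0


def validation_rate(records: Iterable[Mapping],
                    rules: Iterable[Callable[[Mapping], bool]]) -> float:
    """Percentage of records that pass every validation rule."""
    records = list(records)
    rules = list(rules)
    passed = sum(1 for r in records if all(rule(r) for rule in rules))
    return pct(passed, len(records))


def reconciliation_rate(source: Mapping, target: Mapping) -> float:
    """Percentage of source records found in the target with identical content."""
    matched = sum(1 for key, row in source.items()
                  if key in target and target[key] == row)
    return pct(matched, len(source))


# Hypothetical sample data keyed by record ID.
source = {1: {"amount": 10.0}, 2: {"amount": 20.0},
          3: {"amount": 30.0}, 4: {"amount": 40.0}}
target = {1: {"amount": 10.0}, 2: {"amount": 20.0}, 3: {"amount": 31.0}}

if __name__ == "__main__":
    print(reconciliation_rate(source, target))  # records 1 and 2 match
    print(pct(9950, 10000))  # the currency-conversion example from the text
```

The same `pct` helper covers the transformation accuracy example: 9,950 correct conversions out of 10,000 gives 99.5%.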

KPIs for Data Completeness

Data completeness refers to the presence of all necessary data fields within the migrated data. Incomplete data can hinder reporting, analysis, and overall system functionality. Measuring data completeness is crucial for ensuring data usability and value.

- Missing Data Rate: This KPI tracks the percentage of missing data values in critical fields after migration. For a given field, it is calculated by dividing the number of records with a missing value by the total number of records and multiplying by 100.

- Field Population Rate: This measures the percentage of fields populated with valid data. This is particularly important for fields that are essential for system operation.

- Record Completeness Rate: This quantifies the percentage of records that meet a predefined completeness threshold, often based on the number of populated fields.

- Data Coverage: This assesses the extent to which all relevant data from the source system has been migrated to the target system. This could involve comparing the number of records, the volume of data, or the number of distinct values in a specific field. For example, if a source system contains 1,000,000 customer records and the target system only has 950,000 after migration, the data coverage is 95%.
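The completeness KPIs can be sketched in a few lines. This example assumes records are dictionaries and treats `None` and blank strings as missing; both the function names and that definition of "missing" are assumptions to adapt to the actual data model.

```python
from typing import Iterable, Mapping, Sequence


def is_missing(value) -> bool:
    """Treat None and blank strings as missing (an assumed definition)."""
    return value is None or (isinstance(value, str) and value.strip() == "")


def missing_data_rate(records: Iterable[Mapping], field: str) -> float:
    """Percentage of records whose `field` is missing."""
    records = list(records)
    if not records:
        return 0.0
    missing = sum(1 for r in records if is_missing(r.get(field)))
    return 100.0 * missing / len(records)


def record_completeness_rate(records: Iterable[Mapping],
                             fields: Sequence[str],
                             min_populated: int) -> float:
    """Percentage of records with at least `min_populated` of `fields` filled."""
    records = list(records)
    if not records:
        return 0.0
    complete = sum(
        1 for r in records
        if sum(not is_missing(r.get(f)) for f in fields) >= min_populated
    )
    return 100.0 * complete / len(records)


def data_coverage(source_count: int, target_count: int) -> float:
    """Percentage of source records present in the target, by count."""
    return 100.0 * target_count / source_count


if __name__ == "__main__":
    print(data_coverage(1_000_000, 950_000))  # the example from the text
```

Field population rate is the complement of the missing data rate for the same field, so it does not need a separate function here.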

KPIs for Data Integrity

Data integrity ensures the consistency, accuracy, and validity of data throughout its lifecycle. Maintaining data integrity is critical for data reliability and trustworthiness. Monitoring these KPIs helps ensure that the data remains consistent after migration.

- Referential Integrity Violations: This KPI tracks the number of instances where relationships between data records are broken. For example, if a foreign key references a non-existent primary key, it constitutes a violation.

- Duplicate Data Rate: This measures the percentage of duplicate records in the target system after migration. Duplicate data can skew analysis and lead to inaccurate conclusions.

- Data Consistency Rate: This assesses the degree to which data values remain consistent across different systems or databases. For example, if a customer’s address is different in two systems, it indicates a consistency issue.

- Data Type Validation: This assesses the degree to which data conforms to the defined data types in the target system. For example, if a numeric field contains text, it indicates a data type validation error.
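Referential integrity violations and duplicates are usually counted with queries against the target database. The sketch below uses an in-memory SQLite database purely for illustration; the `customers` and `orders` tables, their columns, and the choice of `email` as the duplicate key are all hypothetical.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a small in-memory database with one orphan order and one duplicate email."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE customers (id INTEGER, email TEXT);
        CREATE TABLE orders (id INTEGER, customer_id INTEGER);
        INSERT INTO customers VALUES
            (1, 'a@example.com'), (2, 'b@example.com'), (3, 'a@example.com');
        INSERT INTO orders VALUES (10, 1), (11, 2), (12, 99);
    """)
    return conn


def referential_violations(conn: sqlite3.Connection) -> int:
    """Count orders whose customer_id has no matching customer row."""
    row = conn.execute("""
        SELECT COUNT(*)
        FROM orders o
        LEFT JOIN customers c ON c.id = o.customer_id
        WHERE c.id IS NULL
    """).fetchone()
    return row[0]


def duplicate_rate(conn: sqlite3.Connection) -> float:
    """Percentage of customer rows that repeat an already-seen email."""
    total = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
    distinct = conn.execute(
        "SELECT COUNT(DISTINCT email) FROM customers").fetchone()[0]
    return 100.0 * (total - distinct) / total if total else 0.0


if __name__ == "__main__":
    conn = build_demo_db()
    print(referential_violations(conn))  # order 12 references customer 99
    print(round(duplicate_rate(conn), 1))
```

In a real target system, enforced foreign key constraints would prevent orphans from being written at all; a query like this is mainly useful when constraints are disabled during bulk load.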

KPI Organization Table

To effectively manage and monitor these KPIs, it’s crucial to organize them in a structured format. The following table provides a framework for tracking KPIs, including their descriptions, target values, and measurement methods.

| KPI | Description | Target | Measurement Method |
|---|---|---|---|
| Data Validation Rate | Percentage of data records that successfully pass validation rules after migration. | 99% | Automated validation scripts and data quality reports. |
| Missing Data Rate | Percentage of missing data values in critical fields after migration. | <1% | Data profiling tools and manual review of sample data. |
| Referential Integrity Violations | Number of instances where relationships between data records are broken. | 0 | Database queries and integrity checks performed on the target system. |
| Project Completion Rate | Percentage of migration tasks completed against the total planned tasks. | 100% | Project management software and regular progress reports. |
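A table like this can also be kept in code so that measured values are checked against targets automatically. The following is one possible sketch; the `Kpi` class and the comparison operators chosen for each target (for example, treating "99%" as "at least 99") are assumptions rather than part of the framework described here.

```python
import operator
from dataclasses import dataclass

# Map a comparator symbol to the function that implements it.
_OPS = {">=": operator.ge, "<": operator.lt, "==": operator.eq}


@dataclass(frozen=True)
class Kpi:
    name: str
    comparator: str  # one of ">=", "<", "=="
    target: float


def meets_target(kpi: Kpi, measured: float) -> bool:
    """Return True when the measured value satisfies the KPI target."""
    return _OPS[kpi.comparator](measured, kpi.target)


# Targets taken from the table; comparators are assumed interpretations.
KPIS = [
    Kpi("Data Validation Rate", ">=", 99.0),
    Kpi("Missing Data Rate", "<", 1.0),
    Kpi("Referential Integrity Violations", "==", 0.0),
    Kpi("Project Completion Rate", ">=", 100.0),
]

if __name__ == "__main__":
    # Hypothetical measurements for illustration only.
    measured = [99.4, 1.3, 0.0, 92.0]
    for kpi, value in zip(KPIS, measured):
        flag = "OK" if meets_target(kpi, value) else "MISSED"
        print(f"{kpi.name}: {value} ({flag})")
```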

Setting Baseline Metrics

Establishing robust baseline metrics is crucial before commencing any migration project. These metrics serve as the foundation for measuring the success of the migration, providing a comparative benchmark against which post-migration performance is evaluated. Without a clear understanding of the current system’s behavior, it becomes exceedingly difficult to ascertain whether the migration has yielded the desired improvements or introduced unintended regressions.

This pre-migration analysis allows for informed decision-making throughout the process, enabling proactive identification and mitigation of potential issues.

Data Gathering Methods for Current System Performance

Several methods are employed to gather comprehensive data regarding the existing system’s performance. These methods, often used in conjunction, provide a multifaceted view of the system’s operational characteristics.

- System Monitoring Tools: Using existing system monitoring tools to capture data such as CPU utilization, memory consumption, disk I/O, network latency, and error rates. These tools typically provide real-time dashboards and historical data, allowing for trend analysis and identification of performance bottlenecks. For instance, a monitoring tool might reveal that a particular database server consistently experiences high CPU utilization during peak hours, indicating a potential performance issue that needs to be addressed during the migration.

- Application Performance Monitoring (APM): APM tools offer a deeper insight into application-level performance. They track transaction response times, error rates, and dependencies, allowing for the identification of slow-performing code segments or inefficient database queries. Consider an e-commerce website experiencing slow checkout processes. APM can pinpoint the exact database query or code segment responsible for the delay, facilitating optimization efforts during the migration.

- Load Testing: Simulating realistic user traffic patterns to assess the system’s ability to handle peak loads. Load testing involves generating a specified number of concurrent users and monitoring the system’s response times, throughput, and error rates. This helps to determine the system’s capacity and identify potential performance limitations. A successful migration would show the migrated system handling the same load with equal or better performance metrics.

- Database Performance Analysis: Analyzing database performance metrics, such as query execution times, indexing efficiency, and transaction throughput. This involves using database-specific tools to identify slow queries, inefficient indexes, and other performance bottlenecks. For example, identifying and optimizing a frequently executed, slow-performing query before the migration can significantly improve the performance of the migrated application.

- Network Performance Measurement: Measuring network latency, bandwidth utilization, and packet loss to assess the network’s impact on application performance. This can involve using tools to monitor network traffic and identify any bottlenecks or performance limitations.
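Whatever tool collects the data, a baseline is only useful once it is reduced to a few comparable numbers. The sketch below summarizes response-time samples and flags metrics that got worse after migration; the function names, the choice of mean, median, and 95th percentile, and the 5% tolerance are illustrative assumptions.

```python
import statistics
from typing import Dict, List, Sequence


def summarize(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Reduce response-time samples (at least two) to mean, median and p95."""
    ordered = sorted(samples_ms)
    # quantiles(n=100) returns the 99 cut points between percentiles 1..99.
    cuts = statistics.quantiles(ordered, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(ordered),
        "p50": statistics.median(ordered),
        "p95": cuts[94],
    }


def regressions(baseline: Dict[str, float],
                current: Dict[str, float],
                tolerance_pct: float = 5.0) -> List[str]:
    """Names of metrics that exceed the baseline by more than the tolerance."""
    limit = 1 + tolerance_pct / 100
    return [name for name in baseline if current[name] > baseline[name] * limit]


if __name__ == "__main__":
    # Hypothetical samples, in milliseconds.
    before = summarize([110, 120, 95, 130, 105, 400, 115, 125])
    after = summarize([100, 115, 90, 120, 100, 450, 110, 118])
    print(before)
    print(regressions(before, after))
```

Lower-is-better metrics such as latency fit this comparison directly; throughput-style metrics would need the inequality reversed.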

Tools Used for Performance Measurement Before Migration

A variety of tools are available to facilitate the collection and analysis of performance data prior to migration. The selection of tools depends on the specific technology stack and the depth of analysis required.

- Operating System Monitoring Tools: Tools native to the operating system, such as `top`, `vmstat`, `iostat` (Linux/Unix), and Performance Monitor (Windows), provide real-time and historical data on CPU, memory, disk I/O, and other system-level metrics.

- Application Performance Monitoring (APM) Tools: Commercial APM tools, such as Dynatrace, New Relic, AppDynamics, and Datadog, offer comprehensive application-level performance monitoring, including transaction tracing, code-level profiling, and error analysis.

- Network Monitoring Tools: Tools like Wireshark, tcpdump, and SolarWinds Network Performance Monitor (NPM) are used to capture and analyze network traffic, identify latency, and monitor bandwidth utilization.

- Database Performance Monitoring Tools: Database-specific tools, such as Oracle Enterprise Manager, SQL Server Management Studio, and pgAdmin (for PostgreSQL), provide insights into database performance, including query execution plans, index usage, and transaction throughput.

- Load Testing Tools: Tools such as Apache JMeter, Gatling, and LoadRunner simulate user traffic and measure system performance under load.

- Log Analysis Tools: Tools such as the ELK stack (Elasticsearch, Logstash, Kibana), Splunk, and Graylog are used to collect, analyze, and visualize log data, providing insights into application behavior and error patterns.

Data Quality Measurement

Data quality is paramount during any migration process. Ensuring data accuracy, completeness, consistency, and validity is crucial for the successful transition of business operations and decision-making capabilities. Neglecting data quality can lead to inaccurate reporting, flawed analytics, and ultimately, compromised business outcomes. Therefore, establishing rigorous measurement techniques and monitoring procedures is essential to mitigate risks and guarantee data integrity throughout the migration lifecycle.

Data Quality Dimensions and Assessment Techniques

Data quality assessment necessitates a multifaceted approach, evaluating various dimensions to ensure the reliability and usability of migrated data. Each dimension requires specific measurement techniques and predefined thresholds to identify and address potential issues proactively.

| Data Quality Dimension | Measurement Technique | Acceptable Threshold | Example/Explanation |
|---|---|---|---|
| Accuracy | Validation rules, data profiling, manual review of samples | < 1% error rate (or as defined by business requirements) | For example, a validation rule could reject any email address that doesn’t contain an “@” symbol. Data profiling could identify unusual salary values outside a reasonable range. Manual review could involve checking a random sample of customer addresses for accuracy. |
| Completeness | Empty-field checks, source-to-target record count comparison | > 99% completeness (or as defined by business requirements) | If a customer’s address field is frequently empty, it indicates incomplete data. Comparing the total number of customer records in the source and target databases can reveal missing records. |
| Consistency | Cross-system comparison, referential integrity checks | < 2% inconsistency rate (or as defined by business requirements) | If a customer’s address is listed differently in the sales and billing systems, it indicates inconsistency. Referential integrity checks ensure that orders reference existing customer IDs. |
| Validity | Data type checks, range checks, format checks | < 1% invalid data (or as defined by business requirements) | For example, ensuring that a “salary” field contains numeric data only. Range checks can ensure that ages are within a reasonable range (e.g., 0-120). Format checks can validate phone numbers against a specific format. |
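The example checks in the table translate naturally into small predicate functions. This is a minimal sketch: the field names, the `NNN-NNN-NNNN` phone pattern, and the rule that a record is invalid if any check fails are all assumptions chosen for illustration.

```python
import re
from typing import Callable, Dict, Iterable, Mapping

# Hypothetical phone format; real formats vary by country and system.
PHONE_RE = re.compile(r"^\d{3}-\d{3}-\d{4}$")


def has_at_symbol(value) -> bool:
    """Very loose email check taken from the table: must contain '@'."""
    return isinstance(value, str) and "@" in value


def is_numeric(value) -> bool:
    """True for ints and floats, but not booleans."""
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def age_in_range(value) -> bool:
    """Range check for ages between 0 and 120 inclusive."""
    return is_numeric(value) and 0 <= value <= 120


def phone_format_ok(value) -> bool:
    """Format check against the assumed phone pattern."""
    return isinstance(value, str) and PHONE_RE.match(value) is not None


def invalid_rate(records: Iterable[Mapping],
                 checks: Dict[str, Callable[[object], bool]]) -> float:
    """Percentage of records that fail at least one field check."""
    records = list(records)
    if not records:
        return 0.0
    bad = sum(1 for r in records
              if not all(check(r.get(field)) for field, check in checks.items()))
    return 100.0 * bad / len(records)


CHECKS = {
    "email": has_at_symbol,
    "salary": is_numeric,
    "age": age_in_range,
    "phone": phone_format_ok,
}
```

The resulting rate can then be compared against the threshold in the table, for example flagging the dataset when `invalid_rate(records, CHECKS)` is 1% or higher.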

Measuring Migration Speed and Efficiency

Accurately assessing the speed and efficiency of data migration is critical for project success. These metrics allow for proactive identification of bottlenecks, optimization of resource allocation, and informed decision-making throughout the migration lifecycle. Careful measurement provides a basis for evaluating the performance of the migration strategy and for making adjustments to maintain timelines and budget constraints.

Metrics for Tracking Data Migration Speed

Tracking the speed of data migration involves quantifying the rate at which data is transferred and processed. Several key metrics are crucial for monitoring this aspect of the migration.

- Data Transfer Rate: This metric quantifies the volume of data moved per unit of time, typically measured in gigabytes per hour (GB/hr) or terabytes per day (TB/day). This is a fundamental indicator of migration speed.

- Record Processing Rate: This metric measures the number of individual records or objects processed per unit of time. It’s particularly relevant when migrating systems with a high volume of individual data elements, such as customer records or transactions.

- Downtime: The duration of system unavailability during the migration process is critical. This metric is typically measured in hours or minutes and directly impacts business operations.

- Latency: This refers to the delay between a data transfer request being issued and the data beginning to arrive at the target. High latency can significantly impact migration speed, particularly in geographically distributed environments.

- Percentage of Data Migrated: Tracking the cumulative progress of the data migration, expressed as a percentage, provides an overall view of the project’s advancement.

- Error Rate: The frequency of errors encountered during the migration process, measured as a percentage or a rate per unit of data, is a crucial indicator of data integrity and the effectiveness of the migration strategy.
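Most of these speed metrics are ratios that can be computed from a handful of counters. The sketch below assumes decimal gigabytes (1 GB = 10^9 bytes) and hypothetical function names; it also derives a simple time-remaining estimate, which is not one of the listed metrics but follows directly from the transfer rate.

```python
def transfer_rate_gb_per_hr(bytes_moved: int, elapsed_seconds: float) -> float:
    """Data transfer rate in GB/hr, assuming 1 GB = 10**9 bytes."""
    return (bytes_moved / 1e9) / (elapsed_seconds / 3600)


def records_per_second(records_processed: int, elapsed_seconds: float) -> float:
    """Record processing rate."""
    return records_processed / elapsed_seconds


def percent_migrated(migrated: int, total: int) -> float:
    """Cumulative progress as a percentage of the total volume or record count."""
    return 100.0 * migrated / total


def eta_hours(remaining_gb: float, rate_gb_per_hr: float) -> float:
    """Estimated hours left, assuming the current rate holds."""
    return remaining_gb / rate_gb_per_hr


if __name__ == "__main__":
    # Hypothetical run: 500 GB moved in two hours, 1,000 GB still to go.
    rate = transfer_rate_gb_per_hr(500 * 10**9, 7200)
    print(rate)
    print(eta_hours(1000, rate))
```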

Factors Influencing Migration Efficiency

Numerous factors can influence the efficiency of the data migration process. Understanding these elements allows for proactive mitigation strategies to maintain optimal performance.

- Network Bandwidth: The available network bandwidth directly impacts the data transfer rate. Insufficient bandwidth can significantly slow down the migration.

- Hardware Performance: The processing power, storage capacity, and I/O performance of both the source and target systems influence migration speed.

- Data Volume and Complexity: The total amount of data to be migrated and the complexity of the data structures affect the processing time and the overall migration duration.

- Migration Tool Capabilities: The features, performance, and scalability of the migration tools used have a direct impact on efficiency.

- Data Transformation Requirements: Complex data transformations and cleansing processes add to the processing time and can affect the overall migration efficiency.

- Database Performance: The performance of both the source and target databases affects the speed at which data can be read and written. Database configuration and optimization are crucial.

- Concurrency: The number of concurrent processes or threads used during the migration can affect the performance. Too many concurrent processes can lead to resource contention, while too few can underutilize available resources.

- Source System Load: The load on the source system during the migration can impact its performance and affect the overall speed.

Techniques to Optimize Migration Speed and Efficiency

Several techniques can be employed to optimize data migration speed and efficiency, leading to a smoother and more successful project.

- Optimize Network Infrastructure: Ensure sufficient network bandwidth is available and optimized for data transfer. Consider using network optimization techniques such as Quality of Service (QoS) to prioritize migration traffic.

- Hardware Upgrades: Upgrade the hardware on the source and target systems, including servers, storage, and network components, to improve performance.

- Parallel Processing: Implement parallel processing techniques to migrate data in multiple streams simultaneously, reducing the overall migration time.

- Data Compression: Compress data before transfer to reduce the amount of data transmitted over the network.

- Incremental Migration: Implement an incremental migration strategy, where only changed data is migrated periodically, minimizing downtime and reducing the overall data transfer volume.

- Data Chunking: Divide large datasets into smaller chunks to improve processing efficiency and reduce the impact of individual errors.

- Database Optimization: Optimize the database configurations and performance on both the source and target systems, including indexing, query optimization, and storage configuration.

- Tool Selection and Optimization: Choose the right migration tools and optimize their configuration and settings for the specific migration requirements.

- Pre-Migration Data Cleansing: Cleanse and transform data before migration to reduce the amount of data to be transferred and improve the quality of the data in the target system.

- Monitor and Tune: Continuously monitor the migration process and make adjustments to the configuration and resource allocation to optimize performance.
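Data chunking and parallel processing are often combined: the dataset is split into fixed-size batches and several workers move batches concurrently. The following is a minimal sketch using Python's standard thread pool; `migrate_chunk` is a hypothetical placeholder for the real write to the target system, and the chunk size and worker count are arbitrary.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import islice
from typing import Iterable, Iterator, List


def chunked(rows: Iterable, size: int) -> Iterator[List]:
    """Yield successive lists of at most `size` rows."""
    it = iter(rows)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


def migrate_chunk(chunk: List) -> int:
    """Hypothetical stand-in for writing one chunk to the target.

    Returns the number of rows written so the caller can total them.
    """
    return len(chunk)


def migrate_in_parallel(rows: Iterable, chunk_size: int = 1000,
                        workers: int = 4) -> int:
    """Migrate all rows in chunks using a pool of worker threads."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(migrate_chunk, chunked(rows, chunk_size)))


if __name__ == "__main__":
    print(migrate_in_parallel(range(2500), chunk_size=1000, workers=4))
```

Threads suit I/O-bound transfers; as noted under concurrency above, the worker count should be tuned so that the source and target systems are not pushed into resource contention.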

Resource Utilization Tracking

Effective resource utilization tracking is critical during a migration to ensure the process remains cost-effective and performs optimally. Monitoring resource consumption allows for proactive adjustments, preventing bottlenecks, and optimizing the allocation of resources. This proactive approach helps minimize unnecessary expenditures and maximizes the efficiency of the migration effort.

Measuring Resource Utilization During Migration

Accurate measurement of resource utilization necessitates a multi-faceted approach, encompassing various aspects of the infrastructure involved in the migration process. Comprehensive monitoring provides insights into resource demands and performance characteristics.

- Server Capacity: Monitoring server capacity involves tracking CPU usage, memory consumption, disk I/O, and network bandwidth utilization. Tools such as `top`, `htop` (for Linux), and Performance Monitor (for Windows) provide real-time insights. Analyzing historical data allows for identifying trends and predicting future resource needs. For instance, an upward trend in CPU usage on a source server during data extraction indicates a potential need for increased compute resources on the target environment.

- Bandwidth Consumption: Bandwidth usage should be closely monitored to prevent network congestion and ensure timely data transfer. Tools like `iftop`, `nload` (Linux), and built-in network monitoring tools in operating systems help in tracking network traffic. Analyzing the rate of data transfer, identifying peak usage times, and understanding the types of data being transferred (e.g., large files, database dumps) is essential.

- Storage I/O: Monitoring disk I/O operations is crucial for identifying storage bottlenecks. Metrics such as read/write speeds, queue length, and latency provide insights into storage performance. Tools like `iostat` (Linux) and Performance Monitor (Windows) can be used to track these metrics. High latency or a long queue length can indicate storage performance issues that may need to be addressed by optimizing storage configurations.

- Database Resources: Database migrations often require careful monitoring of database server resources, including CPU, memory, and disk I/O. Database-specific monitoring tools, such as those provided by the database vendor (e.g., Oracle Enterprise Manager, SQL Server Management Studio), should be employed. Monitoring database performance during the migration can identify potential issues such as slow query execution, which can impact the migration speed.

Optimizing Resource Allocation to Minimize Costs

Optimizing resource allocation is essential for controlling costs and ensuring efficient use of resources during the migration. This involves strategic planning, real-time monitoring, and the ability to adjust resource allocations dynamically.

- Right-Sizing Instances: Analyze the resource utilization of the source environment to determine the appropriate instance sizes for the target environment. Over-provisioning leads to unnecessary costs, while under-provisioning can cause performance bottlenecks. Tools that provide resource utilization data, such as cloud provider monitoring dashboards, can help to identify resource requirements accurately.

- Automated Scaling: Implement automated scaling mechanisms to dynamically adjust resources based on demand. Cloud providers offer auto-scaling features that can automatically add or remove resources (e.g., virtual machines, database instances) based on predefined metrics (e.g., CPU utilization, network traffic). This ensures that resources are available when needed, while also minimizing costs during periods of low demand.

- Data Compression: Implement data compression techniques during data transfer to reduce bandwidth consumption and storage requirements. Tools like `gzip`, `bzip2`, or more advanced compression algorithms can be used. The optimal compression method will depend on the data type and the available resources.

- Parallel Processing: Employ parallel processing techniques to accelerate data transfer and processing. Breaking down large migration tasks into smaller, parallel tasks can significantly reduce the overall migration time. For example, migrating multiple databases simultaneously can be much faster than migrating them sequentially.

- Optimizing Database Queries: For database migrations, optimize database queries to improve performance. Slow queries can consume significant resources and delay the migration process. Use database performance monitoring tools to identify and optimize slow-running queries.
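
As a rough illustration of the compression and parallel-processing points above, the sketch below compresses chunks of data with Python's standard `gzip` module across a thread pool. The sample data and the `compress_chunk`, `compress_in_parallel`, and `compression_ratio` helpers are hypothetical stand-ins; a real migration would stream data from the source system rather than hold it in memory.

```python
import gzip
from concurrent.futures import ThreadPoolExecutor


def compress_chunk(chunk: bytes) -> bytes:
    """Compress one chunk; stands in for a compress-and-send step."""
    return gzip.compress(chunk)


def compress_in_parallel(chunks: list[bytes], workers: int = 4) -> list[bytes]:
    """Compress chunks concurrently, preserving input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(compress_chunk, chunks))


def compression_ratio(original: list[bytes], compressed: list[bytes]) -> float:
    """Return compressed size divided by original size (lower is better)."""
    return sum(len(c) for c in compressed) / sum(len(o) for o in original)


# Hypothetical sample data: repetitive CSV-like text compresses well.
sample_chunks = [b"customer_id,name,region\n" * 1000 for _ in range(8)]
compressed_chunks = compress_in_parallel(sample_chunks)
ratio = compression_ratio(sample_chunks, compressed_chunks)
```

Measuring the ratio on a representative sample before the full run is one way to judge whether compression is worth its CPU cost for a given data type.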

Resource Utilization Metrics Table

The following table illustrates example resource utilization metrics, target values, and actual values. This is a sample representation; actual values will vary based on the specific migration and environment.

| Resource Metric | Target Value | Actual Value | Status |
|---|---|---|---|
| CPU Utilization (Source Server) | < 80% | 75% | Within Target |
| Bandwidth Consumption (Data Transfer) | < 500 Mbps | 450 Mbps | Within Target |
| Disk I/O Latency (Target Server) | < 10 ms | 12 ms | Needs Attention |
| Memory Utilization (Target Database) | < 70% | 68% | Within Target |
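
The status column in a table like this can be derived automatically. The following is a minimal sketch that assumes every target is an upper bound; the `ResourceMetric` class and its field names are illustrative, and the values are the sample figures from the table.

```python
from dataclasses import dataclass


@dataclass
class ResourceMetric:
    name: str
    target_max: float  # upper bound the actual value should stay below
    actual: float
    unit: str

    def status(self) -> str:
        """Classify the reading the same way the Status column does."""
        return "Within Target" if self.actual < self.target_max else "Needs Attention"


# Sample values copied from the table above.
metrics = [
    ResourceMetric("CPU Utilization (Source Server)", 80, 75, "%"),
    ResourceMetric("Bandwidth Consumption (Data Transfer)", 500, 450, "Mbps"),
    ResourceMetric("Disk I/O Latency (Target Server)", 10, 12, "ms"),
    ResourceMetric("Memory Utilization (Target Database)", 70, 68, "%"),
]

# Names of the metrics that breach their target.
needs_attention = [m.name for m in metrics if m.status() == "Needs Attention"]
```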

User Acceptance and Satisfaction

Measuring user acceptance and satisfaction is crucial for determining the success of a system migration. A system can function perfectly from a technical standpoint, but if users are dissatisfied or unwilling to adopt it, the migration will be deemed a failure. This section focuses on methods for assessing user acceptance and gathering feedback to improve the migrated system.

Methods for Measuring User Acceptance

User acceptance is a multifaceted concept and cannot be measured through a single metric. Instead, a combination of quantitative and qualitative methods is necessary to gain a comprehensive understanding.

- Usage Rate Analysis: Tracking the frequency and duration of user interactions with the new system provides a quantitative measure of adoption. Increased usage over time indicates growing acceptance. For example, if a migrated CRM system shows a 30% increase in daily active users within the first month, it suggests positive user adoption.

- Task Completion Rates: Monitoring the successful completion of key tasks within the new system reveals its usability and efficiency. A higher task completion rate signifies that users can effectively navigate and utilize the system to achieve their goals. This is often compared to the completion rate before migration.

- Error Rate Analysis: Analyzing the frequency of errors encountered by users provides insight into the system’s stability and ease of use. A decrease in error rates post-migration suggests improved user experience. For instance, if the number of help desk tickets related to a specific function decreases after the migration, it indicates better usability.

- User Feedback Analysis: Gathering and analyzing user feedback through surveys, interviews, and focus groups offers qualitative insights into user perceptions, concerns, and suggestions. This method provides a deeper understanding of user satisfaction and areas needing improvement.

- Training and Support Ticket Analysis: Tracking the volume and type of training requests and support tickets provides insight into the learning curve and areas where users are struggling. A decrease in these requests over time often indicates increased familiarity and acceptance.
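
Two of the quantitative measures above, usage growth and task completion rate, reduce to simple arithmetic. The sketch below shows one way to compute them; the function names and the input figures are hypothetical and chosen only to mirror the 30% usage example.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, as a percentage."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


def task_completion_rate(completed: int, attempted: int) -> float:
    """Share of attempted tasks that users finished, as a percentage."""
    if attempted == 0:
        raise ValueError("no tasks attempted")
    return completed / attempted * 100


# Hypothetical figures: daily active users at launch vs. one month later.
dau_change = percent_change(before=200, after=260)

# Hypothetical figures: task completion before and after the migration.
rate_before = task_completion_rate(completed=170, attempted=200)
rate_after = task_completion_rate(completed=184, attempted=200)
completion_improved = rate_after > rate_before
```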

Methods for Gathering User Feedback

Gathering user feedback is essential for understanding user experiences and identifying areas for improvement. Several methods can be employed to collect valuable insights.

- Surveys: Surveys are a structured and efficient way to collect quantitative and qualitative data from a large user base. Surveys can be distributed electronically, making them easily accessible and scalable.

- Interviews: One-on-one interviews provide an opportunity for in-depth conversations with users, allowing for a more nuanced understanding of their experiences and perspectives. Interviews are particularly useful for exploring complex issues or gathering detailed feedback on specific aspects of the system.

- Focus Groups: Focus groups involve a moderated discussion with a small group of users, providing a platform for collaborative feedback and identifying common themes and concerns. Focus groups are beneficial for exploring user perceptions and identifying areas of consensus or disagreement.

- Usability Testing: Usability testing involves observing users as they interact with the system to perform specific tasks. This method provides direct insights into usability issues and areas where users struggle.

- Feedback Forms: Implementing in-system feedback forms or dedicated email addresses allows users to provide feedback at any time. This method provides a continuous stream of user input and helps to identify emerging issues.

Survey Design for User Feedback

Designing a well-structured survey is critical for gathering meaningful user feedback. The survey should be concise, clear, and focused on relevant aspects of the system.

- Define Objectives: Clearly define the goals of the survey. What specific information are you trying to gather?

- Target Audience: Identify the target audience for the survey. Tailor the questions to their specific roles and responsibilities.

- Question Types: Use a mix of question types, including:

- Multiple Choice: For gathering categorical data (e.g., “How satisfied are you with the system’s performance? (Very Satisfied, Satisfied, Neutral, Dissatisfied, Very Dissatisfied)”)

- Rating Scales (Likert Scales): For measuring attitudes and opinions (e.g., “The new system is easy to use.” (Strongly Agree, Agree, Neutral, Disagree, Strongly Disagree))

- Open-ended Questions: For gathering qualitative data and allowing users to provide detailed feedback (e.g., “What are the three most significant improvements in the new system compared to the old one?”)

- Question Examples:

- Ease of Use: “How easy is it to navigate the new system?”

- Functionality: “Are the new features meeting your needs?”

- Performance: “How would you rate the speed and responsiveness of the new system?”

- Training and Support: “Was the training provided sufficient to use the new system?”

- Overall Satisfaction: “Overall, how satisfied are you with the new system?”

- Open-Ended Question: “What aspects of the new system do you find most challenging?”

- Survey Length: Keep the survey concise to maximize response rates.

- Pilot Testing: Test the survey with a small group of users before distributing it to the entire user base.

- Data Analysis: Plan how you will analyze the survey data before distributing the survey.
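
Planning the analysis in advance can be as simple as deciding how Likert answers will be scored. The sketch below assumes the five-point agreement scale shown earlier, mapped to scores 1 to 5; the `summarize_likert` helper and the sample answers are hypothetical.

```python
from collections import Counter
from statistics import mean

# Assumed scoring for the five-point agreement scale.
LIKERT_SCORES = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Neutral": 3,
    "Agree": 4,
    "Strongly Agree": 5,
}


def summarize_likert(responses: list[str]) -> dict:
    """Return the mean score, share of favorable answers, and raw counts."""
    scores = [LIKERT_SCORES[r] for r in responses]
    favorable = sum(1 for s in scores if s >= 4)  # Agree or Strongly Agree
    return {
        "mean_score": mean(scores),
        "favorable_pct": favorable / len(scores) * 100,
        "counts": Counter(responses),
    }


# Hypothetical answers to "The new system is easy to use."
answers = ["Agree", "Strongly Agree", "Neutral", "Agree", "Disagree"]
summary = summarize_likert(answers)
```

Reporting the favorable share alongside the mean helps, because a mean near the midpoint can hide a split between very satisfied and very dissatisfied users.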

Cost Tracking and Analysis

Effective cost tracking and analysis are crucial for ensuring a migration project remains within budget and delivers the expected return on investment. This involves meticulously monitoring all expenses, comparing them against the planned budget, and identifying any variances that require corrective action. A robust cost management strategy allows for informed decision-making throughout the migration lifecycle, preventing cost overruns and optimizing resource allocation.

Methods for Tracking and Analyzing Migration Costs

Several methods facilitate the tracking and analysis of migration costs, ensuring comprehensive oversight of financial aspects. Implementing these methods provides the necessary data for accurate financial management and informed decision-making.

- Detailed Budgeting: Develop a comprehensive budget outlining all anticipated costs, categorized for clarity. This serves as the baseline for comparison and helps identify potential cost drivers.

- Regular Cost Reporting: Generate periodic reports that compare actual expenditures with the budgeted amounts. These reports should highlight variances and provide explanations for any discrepancies.

- Cost Breakdown Structure (CBS): Employ a CBS to categorize project costs hierarchically. This allows for detailed tracking and analysis of expenses at different levels, from individual tasks to overall project phases.

- Variance Analysis: Conduct regular variance analysis to identify and explain differences between planned and actual costs. Investigate significant variances to understand the underlying causes and implement corrective actions.

- Resource Allocation Tracking: Monitor the allocation and utilization of resources (personnel, infrastructure, tools) to ensure efficient cost management. This includes tracking labor hours, infrastructure usage, and tool licensing fees.

- Forecasting: Use historical data and current trends to forecast future costs. This allows for proactive budget adjustments and helps prevent cost overruns.

- Cost Optimization Strategies: Identify and implement cost optimization strategies, such as negotiating favorable vendor contracts, optimizing resource utilization, and automating tasks.

Key Cost Components of a Migration Project

Understanding the key cost components of a migration project is essential for accurate budgeting and effective cost control. These components represent the major areas where expenses are incurred.

- Assessment and Planning: Costs associated with assessing the existing environment, planning the migration strategy, and developing a detailed project plan. This includes costs for consultants, tools, and internal resources.

- Infrastructure Costs: Expenses related to the infrastructure required for the new environment, including servers, storage, network equipment, and cloud services.

- Migration Tools and Software: Costs for purchasing or licensing migration tools, software, and utilities necessary for data transfer, application compatibility, and other migration-related tasks.

- Labor Costs: Salaries and benefits for internal staff, contractors, and consultants involved in the migration project. This is often a significant cost component.

- Data Migration Costs: Expenses related to the actual data migration process, including data cleansing, transformation, and transfer.

- Application Remediation: Costs associated with modifying applications to ensure compatibility with the new environment, including code refactoring, testing, and deployment.

- Training and Support: Expenses for training staff on the new environment and providing ongoing support during and after the migration.

- Testing and Quality Assurance: Costs for testing the migrated systems to ensure they function correctly and meet performance requirements.

- Contingency: A buffer for unforeseen expenses and risks. This is essential for mitigating potential cost overruns.

Cost Categories, Planned Costs, and Actual Costs

A structured table provides a clear overview of cost categories, planned costs, and actual costs, facilitating comparison and variance analysis. This allows stakeholders to quickly assess the financial performance of the migration project.

| Cost Category | Planned Costs | Actual Costs | Variance |
|---|---|---|---|
| Assessment and Planning | $50,000 | $55,000 | +$5,000 |
| Infrastructure Costs | $200,000 | $190,000 | -$10,000 |
| Migration Tools | $25,000 | $28,000 | +$3,000 |
| Labor Costs | $150,000 | $160,000 | +$10,000 |
| Data Migration | $75,000 | $70,000 | -$5,000 |
| Application Remediation | $60,000 | $65,000 | +$5,000 |
| Training and Support | $30,000 | $32,000 | +$2,000 |
| Testing and QA | $40,000 | $40,000 | $0 |
| Contingency | $20,000 | $20,000 | $0 |
| Total | $650,000 | $660,000 | +$10,000 |
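
The variance column can be recomputed from the planned and actual figures, which is a useful check when the table is maintained by hand. This is a minimal sketch using the sample numbers above; the `variance_report` helper is illustrative, not part of any particular tool.

```python
# (planned, actual) costs in USD, copied from the sample table.
costs = {
    "Assessment and Planning": (50_000, 55_000),
    "Infrastructure Costs": (200_000, 190_000),
    "Migration Tools": (25_000, 28_000),
    "Labor Costs": (150_000, 160_000),
    "Data Migration": (75_000, 70_000),
    "Application Remediation": (60_000, 65_000),
    "Training and Support": (30_000, 32_000),
    "Testing and QA": (40_000, 40_000),
    "Contingency": (20_000, 20_000),
}


def variance_report(costs: dict) -> list[tuple[str, int, float]]:
    """Return (category, variance in USD, variance as % of plan) per category."""
    rows = []
    for category, (planned, actual) in costs.items():
        variance = actual - planned  # positive means over budget
        rows.append((category, variance, variance / planned * 100))
    return rows


total_planned = sum(planned for planned, _ in costs.values())
total_actual = sum(actual for _, actual in costs.values())
total_variance = total_actual - total_planned
over_budget = [category for category, variance, _ in variance_report(costs) if variance > 0]
```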

Risk Assessment and Mitigation Metrics

Migration projects are inherently complex and susceptible to risks that can impede progress, increase costs, and compromise overall success. Establishing robust risk assessment and mitigation strategies is paramount to minimizing potential disruptions and ensuring a smooth transition. Measuring the effectiveness of these strategies requires a systematic approach that quantifies the impact of identified risks and the efficacy of the implemented countermeasures.

This section focuses on the critical metrics necessary for assessing and managing migration risks effectively.

Measuring the Effectiveness of Risk Mitigation Strategies

Assessing the effectiveness of risk mitigation strategies requires a proactive and data-driven approach. This involves defining metrics that track the frequency and severity of identified risks, coupled with an evaluation of the implemented mitigation plans. The following elements are essential for this evaluation:

- Risk Occurrence Rate: This metric quantifies the frequency with which identified risks materialize during the migration. It is calculated as:

Risk Occurrence Rate = (Number of Risks Occurring / Total Number of Risks Identified) × 100

A lower risk occurrence rate indicates that the mitigation strategies are effectively preventing or reducing the impact of identified risks.

- Risk Severity Reduction: Evaluate how much the severity of a risk has been reduced by comparing its potential impact before and after mitigation. This can be done through:

- Impact Analysis: Analyze the impact of a risk before and after mitigation. The potential impact of each risk can be assessed using a risk matrix that considers both the probability of occurrence and the severity of the impact (e.g., financial loss, data loss, downtime).

The risk matrix should be updated after mitigation strategies are implemented.

- Severity Scoring: Assign numerical scores to the severity of the impact (e.g., 1-5, where 1 is negligible and 5 is catastrophic). Calculate the average severity score before and after mitigation.

A significant reduction in severity scores indicates that the mitigation strategies are effective.

- Mitigation Cost vs. Benefit: Track the cost associated with implementing each mitigation strategy and compare it to the benefits realized. The benefits can be quantified by:

- Reduced Downtime: Measuring the reduction in downtime (in hours or days) resulting from the mitigation strategy.

- Cost Savings: Calculating the financial savings resulting from avoiding or minimizing the impact of the risk (e.g., reduced recovery costs, avoided fines).

This analysis helps to ensure that resources are allocated effectively to the most impactful mitigation strategies.

- Time to Resolution: Measure the time taken to resolve incidents that arise despite the implemented mitigation strategies. A shorter time to resolution indicates that the response and recovery plans are effective. This can be measured as:

(Time of Incident Resolution – Time of Incident Detection)
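
The formulas in this list translate directly into code. The sketch below is one possible rendering, assuming severity is scored on the 1-5 scale described above; the function names and all input values are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import mean


def risk_occurrence_rate(occurred: int, identified: int) -> float:
    """(Number of Risks Occurring / Total Number of Risks Identified) x 100."""
    if identified == 0:
        raise ValueError("no risks identified")
    return occurred / identified * 100


def severity_reduction(before: list[int], after: list[int]) -> float:
    """Drop in the average severity score (1-5 scale) after mitigation."""
    return mean(before) - mean(after)


def time_to_resolution(detected: datetime, resolved: datetime) -> timedelta:
    """Time of Incident Resolution minus Time of Incident Detection."""
    return resolved - detected


# Hypothetical inputs: 3 of 20 identified risks materialized.
rate = risk_occurrence_rate(occurred=3, identified=20)

# Hypothetical severity scores for four risks, before and after mitigation.
reduction = severity_reduction(before=[4, 5, 3, 4], after=[2, 3, 2, 1])

# Hypothetical incident detected at 09:00 and resolved at 11:30 the same day.
ttr = time_to_resolution(datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 11, 30))
```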

Methods for Tracking the Number of Incidents During the Migration

Tracking the number of incidents during a migration is crucial for identifying potential weaknesses in the migration plan and assessing the effectiveness of risk mitigation measures. Incident tracking should encompass various aspects of the migration process, including data migration, application deployment, and infrastructure configuration. The following methods are essential for comprehensive incident tracking:

- Incident Logging System: Implement a centralized incident logging system to record all incidents that occur during the migration. The system should capture detailed information about each incident, including:

- Incident Description: A clear and concise description of the incident.

- Date and Time: The date and time the incident occurred.

- Severity Level: The severity level of the incident (e.g., critical, major, minor).

- Affected Component: The component or system affected by the incident.

- Root Cause: The root cause of the incident.

- Resolution Steps: The steps taken to resolve the incident.

- Resolution Date and Time: The date and time the incident was resolved.

- Assigned Personnel: The personnel assigned to address the incident.

- Incident Categorization: Categorize incidents based on their type (e.g., data corruption, application failure, network outage, security breach). This categorization allows for identifying trends and patterns in incident occurrences.

- Incident Reporting: Establish a process for generating regular incident reports. These reports should summarize the number of incidents, their severity, their root causes, and the time taken to resolve them. The reports should be shared with relevant stakeholders, including project managers, migration teams, and senior management.

- Incident Analysis: Conduct regular incident analysis to identify the root causes of incidents and implement corrective actions. The analysis should involve a thorough review of incident data, including logs, reports, and any other relevant information. The goal of the analysis is to prevent similar incidents from occurring in the future.
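
The logging fields and the summary report described above could be modeled as follows. This is a sketch rather than a reference to any specific incident management product: the `Incident` class, its field names, and the sample log entries are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Incident:
    description: str
    occurred_at: datetime
    severity: str             # e.g. "critical", "major", "minor"
    affected_component: str
    category: str             # e.g. "data corruption", "network outage"
    root_cause: Optional[str] = None
    resolution_steps: Optional[str] = None
    resolved_at: Optional[datetime] = None
    assigned_to: Optional[str] = None


def incident_report(incidents: list[Incident]) -> dict:
    """Summarize counts by severity and category, plus mean time to resolve."""
    resolved = [i for i in incidents if i.resolved_at is not None]
    durations = [i.resolved_at - i.occurred_at for i in resolved]
    mean_ttr = sum(durations, timedelta()) / len(durations) if durations else None
    return {
        "total": len(incidents),
        "open": len(incidents) - len(resolved),
        "by_severity": Counter(i.severity for i in incidents),
        "by_category": Counter(i.category for i in incidents),
        "mean_time_to_resolve": mean_ttr,
    }


# Hypothetical log entries; the third incident is still open.
log = [
    Incident("Checksum mismatch on orders table", datetime(2024, 3, 1, 9, 0),
             "major", "orders-db", "data corruption",
             resolved_at=datetime(2024, 3, 1, 11, 0)),
    Incident("VPN tunnel dropped", datetime(2024, 3, 2, 14, 0),
             "critical", "network", "network outage",
             resolved_at=datetime(2024, 3, 2, 15, 0)),
    Incident("Report service fails to start", datetime(2024, 3, 3, 8, 0),
             "minor", "reporting-app", "application failure"),
]
report = incident_report(log)
```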

Common Migration Risks and Their Associated Metrics

Identifying and monitoring common migration risks is essential for proactive risk management. The following list details common migration risks and their associated metrics, enabling effective monitoring and mitigation:

- Data Loss or Corruption:

- Metric: Number of data loss incidents, percentage of data corruption, data integrity validation failures.

- Explanation: Data loss can occur due to various reasons, including errors during data transfer, software bugs, or hardware failures. Data corruption compromises data integrity. Metrics include the number of data loss incidents, the percentage of data corrupted, and the number of failures during data integrity validation checks.

- Example: During a database migration, if 100,000 records are transferred and 50 records are found to be corrupted, the data corruption rate is 0.05%. If data integrity validation fails on 10 out of 1000 validation checks, the failure rate is 1%.

- Application Downtime:

- Metric: Total application downtime (hours), average downtime per application, percentage of planned downtime exceeded.

- Explanation: Application downtime can result from various factors, including application compatibility issues, network outages, or server failures. The metrics track the total downtime, the average downtime per application, and the percentage of planned downtime that was exceeded.

- Example: If a critical application is down for 2 hours during a migration, and the planned downtime was 1 hour, the downtime exceeds the plan by 100%.

- Network Connectivity Issues:

- Metric: Number of network outages, average network latency, packet loss rate.

- Explanation: Network connectivity problems can disrupt data transfer, application access, and overall migration progress. The metrics include the number of network outages, average network latency, and the packet loss rate.

- Example: If the average network latency increases from 50ms to 200ms during the migration, it indicates potential network congestion or performance issues.

- Security Breaches:

- Metric: Number of security incidents, unauthorized access attempts, data breach incidents.

- Explanation: Security breaches can compromise sensitive data and disrupt the migration process. The metrics include the number of security incidents, unauthorized access attempts, and data breach incidents.

- Example: If there are 3 unauthorized access attempts to the migrated data within a week, this raises concerns about the security posture of the migration.

- Cost Overruns:

- Metric: Percentage of budget exceeded, actual cost vs. planned cost.

- Explanation: Cost overruns can arise from unforeseen issues, increased scope, or delays. The metrics include the percentage of budget exceeded and the actual cost compared to the planned cost.

- Example: If the migration project was budgeted at $1 million and the final cost is $1.2 million, the cost overrun is 20%.

- Performance Degradation:

- Metric: Application response time, database query performance, server CPU utilization, server memory utilization.

- Explanation: Performance degradation can impact user experience and application efficiency. The metrics include application response time, database query performance, and server resource utilization (CPU and memory).

- Example: If the average response time for a critical application increases from 1 second to 5 seconds after migration, it indicates performance degradation.

- Data Migration Delays:

- Metric: Data migration completion rate, number of delayed data transfers, the average time to transfer data.

- Explanation: Delays in data migration can impact the overall project timeline. Metrics include the rate at which data migration is completed, the number of delayed data transfers, and the average time required to transfer data.

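- Example: If the data migration is only 50% complete by the planned completion date, the migration is significantly delayed. If the average time to transfer a terabyte of data increases from 2 hours to 6 hours, throughput has dropped to a third of its earlier level, which points to a bottleneck (for example, network congestion or slow storage) that should be investigated.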

- User Adoption Issues:

- Metric: User acceptance rate, the number of user support tickets related to migration, user satisfaction scores.

- Explanation: Issues with user adoption can hinder the successful migration. Metrics include user acceptance rates, the number of user support tickets related to the migration, and user satisfaction scores.

- Example: If only 60% of users are actively using the migrated system a month after the migration, it indicates potential adoption issues.
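
Several of the examples in this list rest on the same two calculations: a share of a total and an overrun relative to a plan. The sketch below reproduces the figures quoted above; the helper names `rate_pct` and `overrun_pct` are illustrative.

```python
def rate_pct(part: float, total: float) -> float:
    """Share of a total, as a percentage."""
    if total == 0:
        raise ValueError("total must be non-zero")
    return part / total * 100


def overrun_pct(planned: float, actual: float) -> float:
    """How far the actual value exceeds the plan, as a percentage of the plan."""
    if planned == 0:
        raise ValueError("planned value must be non-zero")
    return (actual - planned) / planned * 100


# Data corruption: 50 corrupted records out of 100,000 transferred.
corruption_rate = rate_pct(50, 100_000)

# Integrity validation: 10 failures out of 1,000 checks.
validation_failure_rate = rate_pct(10, 1_000)

# Downtime: 2 hours actual against 1 hour planned.
downtime_overrun = overrun_pct(planned=1, actual=2)

# Cost: $1.2 million actual against a $1 million budget.
cost_overrun = overrun_pct(planned=1_000_000, actual=1_200_000)
```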

Post-Migration Performance Monitoring

Post-migration performance monitoring is a critical phase that ensures the success of the migration process. It involves continuous assessment of the migrated system’s performance, identifying any issues, and optimizing the system to meet the defined goals. This proactive approach allows for timely intervention, preventing potential problems from escalating and ensuring the stability and efficiency of the new environment.

Establishing Continuous Monitoring

Establishing continuous monitoring necessitates the implementation of robust monitoring tools and processes. These tools collect data on various aspects of the system’s performance, providing insights into its behavior and identifying potential areas for improvement. The data collected should be analyzed regularly to detect anomalies and trends.

- Selecting Monitoring Tools: The selection of appropriate monitoring tools is crucial for effective post-migration performance analysis. These tools should be capable of monitoring various aspects of the system, including:

- Application Performance Monitoring (APM) tools: Tools such as AppDynamics, New Relic, or Dynatrace provide detailed insights into application performance, including response times, error rates, and transaction throughput.

- Infrastructure Monitoring tools: Tools like Prometheus, Grafana, or Nagios monitor the underlying infrastructure, including servers, networks, and storage, tracking resource utilization, latency, and availability.

- Log Management tools: Tools like Splunk, the ELK Stack (Elasticsearch, Logstash, Kibana), or Sumo Logic collect, store, and analyze logs generated by applications and infrastructure components. This allows for identifying errors, security incidents, and performance bottlenecks.

- Defining Monitoring Metrics: Specific metrics must be defined to assess the system’s performance effectively. These metrics should align with the pre-defined KPIs and migration goals.

- Application Performance Metrics: Examples include response time, transaction throughput, error rates, and resource consumption (CPU, memory, disk I/O).

- Infrastructure Performance Metrics: Examples include server CPU utilization, memory usage, network latency, and storage I/O.

- Business Metrics: Examples include conversion rates, revenue generated, and user engagement.

- Setting up Alerts and Notifications: Alerts and notifications must be configured to notify the relevant teams of any critical issues or anomalies. These alerts should be based on predefined thresholds for each metric.

- Threshold-based alerts: Triggered when a metric exceeds a predefined threshold (e.g., CPU utilization exceeding 90%).

- Anomaly detection alerts: Identify unusual patterns or deviations from the baseline performance.

- Automating Monitoring and Reporting: Automating the monitoring and reporting process is crucial for efficiency and consistency. This involves scripting and integrating monitoring tools to collect data, generate reports, and trigger alerts automatically.
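
The two alert types described above can be sketched in a few lines. The anomaly check here uses a simple z-score against recent history, which is only one of many possible detection methods and is an assumption of this example; the function names, the limit of 3 standard deviations, and the sample readings are hypothetical.

```python
from statistics import mean, stdev


def threshold_alert(value: float, threshold: float) -> bool:
    """Fire when a metric exceeds its predefined threshold."""
    return value > threshold


def anomaly_alert(history: list[float], value: float, z_limit: float = 3.0) -> bool:
    """Fire when a value deviates from the baseline by more than z_limit standard deviations."""
    if len(history) < 2:
        return False  # not enough data to establish a baseline
    baseline, spread = mean(history), stdev(history)
    if spread == 0:
        return value != baseline
    return abs(value - baseline) / spread > z_limit


# Hypothetical CPU utilization readings (percent) forming the baseline.
cpu_history = [41.0, 44.0, 39.0, 42.0, 43.0, 40.0]

cpu_over_threshold = threshold_alert(95.0, threshold=90.0)
cpu_is_anomalous = anomaly_alert(cpu_history, 95.0)
normal_reading_flagged = anomaly_alert(cpu_history, 43.0)
```

A threshold catches known limits, while the baseline comparison can flag a reading that is unusual for this system even though it is still below the fixed limit.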

Examples of Post-Migration Performance Reports

Post-migration performance reports provide a comprehensive overview of the system’s performance after migration. These reports are essential for identifying issues, tracking progress, and making informed decisions. The format and content of these reports can vary depending on the specific requirements and the type of migration.

- Application Performance Report: This report focuses on the performance of the migrated applications. It includes metrics such as response times, error rates, transaction throughput, and resource utilization.

- Example: A report might show that the average response time for a specific web application has increased by 15% after migration. Further investigation reveals a database bottleneck, leading to optimization efforts.

- Infrastructure Performance Report: This report provides insights into the performance of the underlying infrastructure. It includes metrics such as server CPU utilization, memory usage, network latency, and storage I/O.

- Example: A report might highlight that a particular server is experiencing high CPU utilization, indicating a potential need for scaling or optimization.

- Business Impact Report: This report focuses on the impact of the migration on business metrics, such as conversion rates, revenue generated, and user engagement.

- Example: A report might show a decrease in website conversion rates after migration. Further analysis reveals issues with the checkout process, which are then addressed.

- Security and Compliance Report: This report assesses the security posture of the migrated system and ensures compliance with relevant regulations. It includes metrics such as security incident counts, vulnerability assessments, and compliance status.

- Example: A report might reveal an increase in attempted security incidents after migration, prompting a review of security configurations and threat detection mechanisms.

Template for a Post-Migration Performance Report

A well-structured post-migration performance report should include key metrics, analysis sections, and actionable recommendations. This template provides a framework for creating a comprehensive report.

| Section | Description | Metrics | Analysis | Recommendations |
|---|---|---|---|---|
| Executive Summary | Brief overview of the migration and its performance. | N/A | Highlights of key findings. | Key recommendations and actions. |
| Application Performance | Performance of the migrated applications. | | | |
| Infrastructure Performance | Performance of the underlying infrastructure. | | | |
| Business Impact | Impact of the migration on business metrics. | | | |
| Security and Compliance | Security posture and compliance status. | | | |
| Cost Analysis | Tracking and analysis of migration costs. | | | |
| Risk Assessment | Ongoing risk assessment and mitigation. | | | |

Final Conclusion

In conclusion, measuring success in data migration is not merely a technical requirement; it is a fundamental driver of project outcomes. By diligently defining goals, establishing baselines, tracking KPIs across all phases, and actively soliciting user feedback, organizations can gain far greater control over their migration initiatives. This analytical framework ensures that data migrations are not just completed, but that they deliver tangible business value by minimizing risks, optimizing resources, and contributing to long-term organizational goals.

Continuous monitoring and reporting further solidify the process, ensuring sustainable improvements and providing actionable insights for future projects.

Common Queries

What is the most critical KPI to track during a migration?

While the most critical KPI varies based on project goals, data accuracy and completeness are generally considered paramount. These metrics directly impact the usability and reliability of the migrated data, influencing downstream business processes.

How often should KPIs be reviewed during a migration?

KPIs should be reviewed regularly, ideally daily or weekly, depending on the migration phase and project complexity. More frequent reviews are essential during critical migration stages or when significant issues arise.

What tools can be used to measure data accuracy?

Data accuracy can be measured using a combination of tools, including data profiling software, automated validation scripts, and manual data review. These tools help identify inconsistencies, errors, and missing data elements.
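
As one illustration of an automated validation script, the sketch below compares source and target rows by primary key using a hash of each row. It assumes both sides have already been loaded into dictionaries and that values have the same Python types on both sides; the `row_fingerprint` and `compare_tables` helpers and the sample rows are hypothetical.

```python
import hashlib


def row_fingerprint(row: tuple) -> str:
    """Hash a row's values so source and target rows can be compared cheaply."""
    # repr() keeps None and "" distinct; assumes matching types on both sides.
    joined = "\x1f".join(repr(value) for value in row)
    return hashlib.sha256(joined.encode("utf-8")).hexdigest()


def compare_tables(source: dict, target: dict) -> dict:
    """Report keys missing from target, extra in target, and rows that differ."""
    missing = sorted(source.keys() - target.keys())
    extra = sorted(target.keys() - source.keys())
    mismatched = sorted(
        key for key in source.keys() & target.keys()
        if row_fingerprint(source[key]) != row_fingerprint(target[key])
    )
    matched = len(source) - len(missing) - len(mismatched)
    return {
        "missing": missing,
        "extra": extra,
        "mismatched": mismatched,
        "accuracy_pct": matched / len(source) * 100 if source else 100.0,
    }


# Hypothetical rows keyed by primary key.
source_rows = {
    1: ("Ada", "ada@example.com"),
    2: ("Grace", "grace@example.com"),
    3: ("Linus", None),
    4: ("Margaret", "margaret@example.com"),
}
target_rows = {
    1: ("Ada", "ada@example.com"),
    2: ("Grace", "GRACE@example.com"),  # value changed in transit
    4: ("Margaret", "margaret@example.com"),
}
result = compare_tables(source_rows, target_rows)
```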

How do you handle discrepancies in data during migration?

Discrepancies should be handled through a combination of data cleansing, transformation, and reconciliation processes. Establish clear procedures for identifying, documenting, and resolving data inconsistencies before and during the migration.

What are the key components of a post-migration performance report?

A post-migration performance report should include key metrics such as data accuracy, system performance (response times, uptime), user satisfaction, and cost analysis. It should also contain an analysis of the results, including any deviations from the baseline and recommendations for improvement.